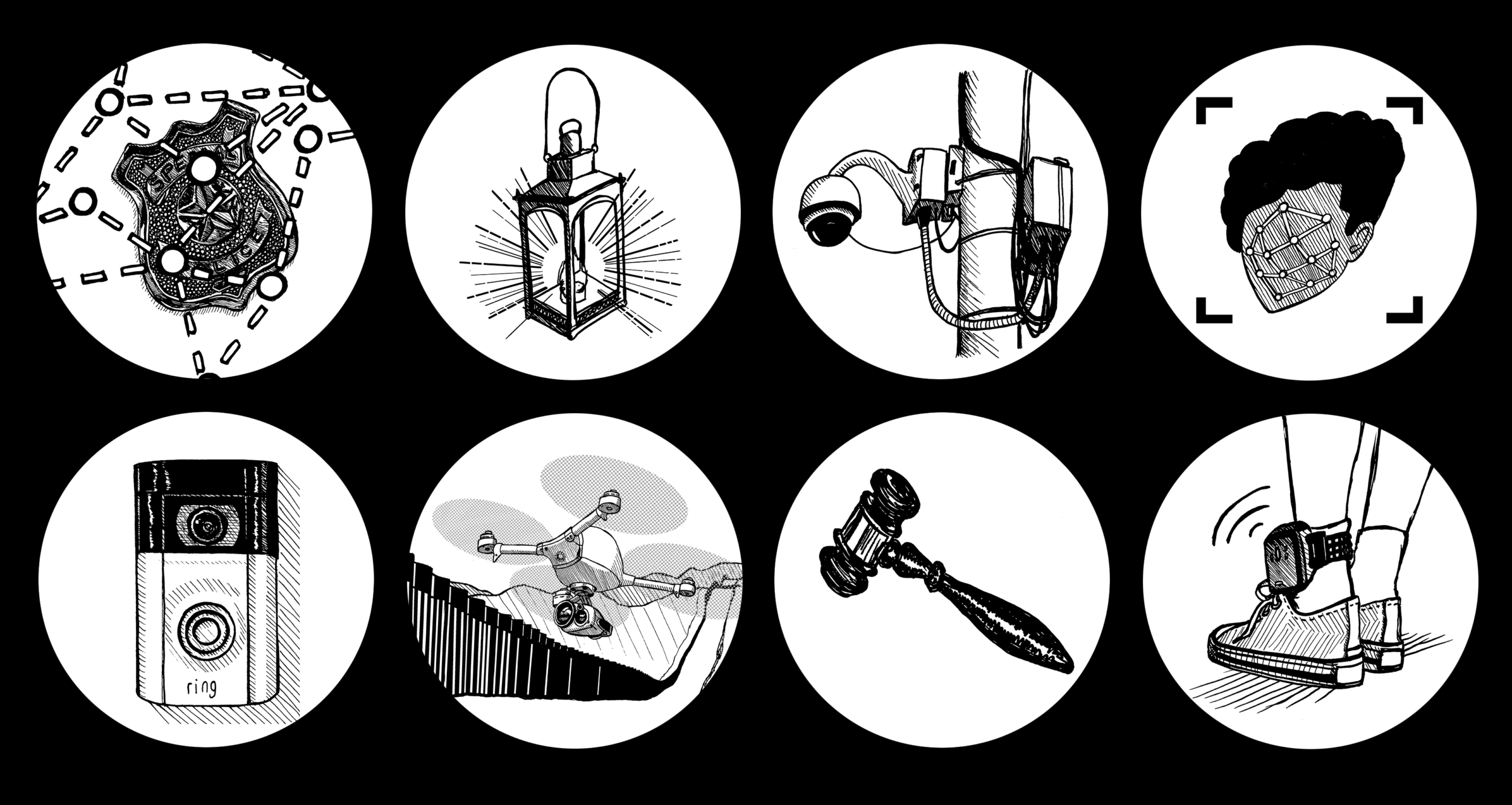

Today, incarcerated people in some facilities are being forced to wear hard plastic bracelets that transmit their live biometric data to the state. At the same time, algorithm-driven technologies are being used to predict who might commit a crime, to detain immigrants and identify sites for ICE raids, and to jail Black people misidentified by facial recognition systems.

It has become increasingly clear that we need a term for the insidious ways artificial intelligence is being used to criminalize society’s most vulnerable, while surveilling the rest of us on a daily basis—and generating billions of dollars in the process. Carceral AI, a term coined by researcher Dasha Pruss and a team of interdisciplinary scholars, captures this phenomenon. Pruss’s research serves as both the inspiration for, and first installment in, our own Carceral AI series, which will address an array of topics ranging from AI-driven facial (mis)recognition to efforts to use AI to justify the revival of so-called “predictive” policing.

The series will continue through 2026. To make sure you never miss an installment, sign up for our weekly newsletter.

Carceral AI is here. It’s time to fight back.

Facial recognition is just the tip of the iceberg. Today, AI is being used to monitor social media, track ICE targets, and classify swaths of the population as “future” criminals.

Dasha Pruss

Tech Won’t Fix Eyewitness Identification

Eyewitness identification is a deeply flawed practice. Adding facial recognition technology, with its veneer of objectivity, only worsens the crisis of mass incarceration.

Will Collins

Turning Death into a Commodity

ShotSpotter has leveraged gun violence into a multimillion-dollar business that promises safety but delivers only increased policing and a drain on the public’s resources.

Ed Vogel

Safety from Surveillance

In their fight to get ShotSpotter out of Chicago, organizers have emphasized that for-profit technology can never deliver on its promises to make communities safer.

Ed Vogel & Sharah Hutson

Borders and Bytes

So-called “smart” borders are just more sophisticated sites of racialized surveillance and violence. We need abolitionist tools to counter them.

Ruha Benjamin

Precrime Is No Longer Science Fiction

Biometric technologies are increasingly using facial expressions, eye movements, voice patterns, and more to predict whether someone has committed or will commit a crime.

Sarah Fathallah

Art: Dasha Pruss